In Cambridge, again, yesterday for a workshop on autonomous flight. We somehow got onto autonomous vehicles on the ground and there was a comment from one participant that I think is worth sharing.

Essentially, it would be OK to hail an autonomous (self-driving) taxi/pod/whatever (with or without a driver/attendant), but NOT OK if it already had another passenger in the back (someone they didn’t know). So, trust in an autonomous vehicle was 100%, but trust in an anonymous person was 0%. This was said by someone in their twenties and might be representative of generational attitudes towards trust. It could also be related to the various recent scandals surrounding Uber.

Reminds me of a comment by an elderly man who lived in a huge house in the middle of a vast estate. He didn’t like walking in his own grounds, which were open to the public, because he might bump into someone he hadn’t been introduced to.

The other thing that caught my attention yesterday was a sign in the ‘window’ of Microsoft Research that said simply “Creating new realities”. Can you have more than one reality?

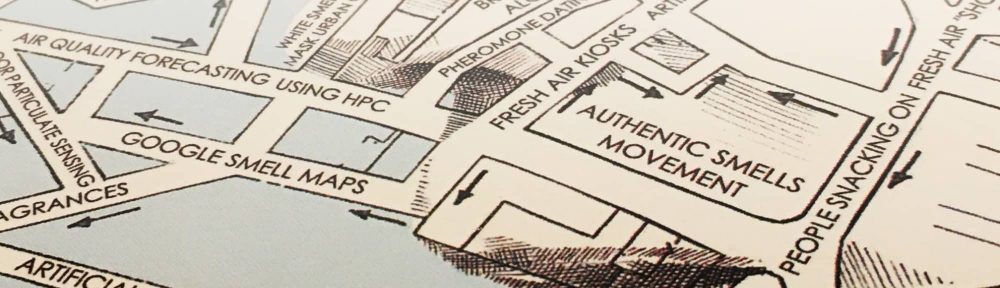

Is reality fixed? A mountain, for example, is there whether you want it to be or not. Or can you create new realities by overlaying virtual data, for instance?

My initial reaction was that there is only one authentic reality, but then I realised this was nonsense in a sense. How I perceive things will be different to how you perceive things but, more fundamentally, how a dog, or a bee, sees things is different to how humans see things. Many butterflies, for instance, can see ultraviolet light as well as visible light, whereas humans see only the visible spectrum (I think that’s correct, correct me if it’s not!).

Just because we see a flower as yellow doesn’t necessarily mean the flower is yellow for other animals. And there’s smell of course, which can vary significantly between animals.

And this all relates to AI ethics…because what I think is ethical isn’t necessarily the same as what you think is ethical, and differences can be magnified when you start dealing with countries and cultures. For instance, what China sees as ethical behaviour for a drone or autonomous passenger vehicle will be different to what the US sees as ethical. And you thought Brexit was complicated.